Your AI Agent Has a Runaway Token Crisis — Here's Exactly How To Fix It and Build a Smarter Brain

Your AI agent is spending too many tokens on skills. A real-world OpenClaw debugging session that turned a runaway token crisis into a complete agent optimization: session hygiene, cron architecture, on-demand skill loading, and a custom SQLite skills registry built from scratch.

The Incident: 10 Days of Token Budget in 3.5 Hours

Chief Wizard — our custom AI executive agent built on OpenClaw — is the operational backbone of both Determination Development and StarLoveXP. Normal daily token spend: around $3.50. One morning the dashboard showed $35 burned in 3.5 hours and Chief had crashed himself by hitting the spending limit.

Before he went down, he produced a receipt. The smoking gun:

Model: Claude Sonnet via OpenRouter

Cache writes: 57,066 tokens × 2,595 turns = ~148M tokens cached

Cache reads: 56k tokens × 2,595 turns = ~145M tokens read

Cache write cost alone: ~$44
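As a quick sanity check on the receipt, the token totals can be recomputed from the per-turn figures. This sketch only multiplies the numbers quoted above. It does not try to reproduce the dollar figure, since that depends on provider pricing that isn't shown here.

```python
# Per-turn figures copied from the receipt above.
CACHE_WRITE_TOKENS_PER_TURN = 57_066
CACHE_READ_TOKENS_PER_TURN = 56_000  # the receipt rounds this to "56k"
TURNS = 2_595


def total_tokens(tokens_per_turn: int, turns: int) -> int:
    """Total tokens when every turn carries the same amount of context."""
    return tokens_per_turn * turns


cache_writes = total_tokens(CACHE_WRITE_TOKENS_PER_TURN, TURNS)
cache_reads = total_tokens(CACHE_READ_TOKENS_PER_TURN, TURNS)

print(f"cache writes: {cache_writes:,} tokens (~{cache_writes / 1e6:.0f}M)")
print(f"cache reads:  {cache_reads:,} tokens (~{cache_reads / 1e6:.0f}M)")
```

The point of the exercise: the per-turn context size barely matters on its own. It is the multiplication by thousands of turns that produces the bill.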

Root Cause #1 — The Bloated Session

OpenClaw maintains persistent sessions per Telegram chat. Chief's main session file had grown to 1.5MB of accumulated conversation history — every interactive session we'd ever had compounded into one never-ending context. Each new message dragged the entire history as context input.

We found the session file at:

~/.openclaw/agents/main/sessions/[session-id].jsonl

The trajectory log — the metadata file that tracks what happens each turn — was a separate 17MB file. The session pointer JSON told OpenClaw to keep loading the same bloated session every time.
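To spot a bloated session before it becomes expensive, it can help to list the largest session files. The following is a minimal sketch, assuming sessions are stored as .jsonl files in the directory shown above. The function name and the limit of five are illustrative choices, not part of OpenClaw.

```python
from pathlib import Path


def largest_files(directory, pattern="*.jsonl", limit=5):
    """Return (size_in_bytes, path) pairs for the biggest matching files."""
    files = [p for p in Path(directory).expanduser().glob(pattern) if p.is_file()]
    sized = [(p.stat().st_size, p) for p in files]
    return sorted(sized, key=lambda pair: pair[0], reverse=True)[:limit]


if __name__ == "__main__":
    # Directory taken from the session path shown above.
    for size, path in largest_files("~/.openclaw/agents/main/sessions"):
        print(f"{size / 1_000_000:8.2f} MB  {path.name}")
```

Anything in the megabyte range is worth a closer look, since the 1.5MB file here was enough to cause the incident.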

Send /new in Telegram to force a fresh session. For ongoing hygiene, send /new at the start of each working day to prevent compounding history.

Root Cause #2 — Runaway Cron Jobs

This was the bigger problem. We had vibe coded an extensive automation suite over the previous weeks (X posting, comment batching, Chrome CDP automation, astrology transmissions, daily office reports) and never fully audited what was actually running. We thought we had halted every job, but they were still firing in the background and running up tokens.

Running cat ~/.openclaw/cron/jobs.json revealed 13 enabled cron jobs:

| Cron Job | Schedule | Status |
|---|---|---|
| Night Office Ignition Report | Daily 11:23 PM | Enabled |
| Tech Temple Daily Signal | Daily 5:55 PM | Enabled |
| Star Love Daily Forge → X | Daily 11:11 AM | Enabled |
| Chief Wizard X — Western Astrology | Daily 5:55 AM | Enabled |
| Chief Wizard X — Jyotish | Daily 5:55 PM | Enabled |
| Chrome CDP Health Check | Daily 5:50 AM | Enabled |
| CW X Comments — AM Batch 1 | Daily 6:06 AM | Enabled |
| CW X Comments — AM Batch 2 | Daily 7:06 AM | Enabled |
| CW X Comments — AM Batch 3 | Daily 8:06 AM | Enabled |
| CW X Comments — PM Batch 1 | Daily 6:06 PM | Enabled |
| CW X Comments — PM Batch 2 | Daily 7:06 PM | Enabled |
| CW X Comments — PM Batch 3 | Daily 8:06 PM | Enabled |
| Astro Event Music Watch | Weekly Thursday | Enabled |
| Total active crons | 13 | All firing |
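Tallying the table gives a feel for how many separate agent sessions the schedule starts. The counts below are read off the rows above. The grouping into comment batches and other daily jobs is only for illustration.

```python
# Counts read off the table above.
daily_comment_batches = 6  # the six "CW X Comments" rows
other_daily_jobs = 6       # reports, signals, X posts, CDP health check
weekly_jobs = 1            # Astro Event Music Watch

enabled_jobs = daily_comment_batches + other_daily_jobs + weekly_jobs
runs_per_day = daily_comment_batches + other_daily_jobs
runs_per_week = runs_per_day * 7 + weekly_jobs

print(f"enabled jobs:  {enabled_jobs}")
print(f"runs per day:  {runs_per_day}")
print(f"runs per week: {runs_per_week}")
```

Each of those runs is its own agent session with its own context load, which is why the schedule matters as much as any single job.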

The comment batches alone were 6 agent runs per day (15, 10, and 5 comments in the AM, then 5, 10, and 15 in the PM), each one spinning up an agent session, loading context, running Playwright browser automation, and billing tokens. The astrology X posts were each generating images, writing 1500–2500 word articles, and posting via Chrome CDP. All on Sonnet.

The jobs were configured with "sessionTarget": "isolated", meaning they weren't supposed to pollute the main Telegram session. But each one was still burning its own isolated token budget. 13 crons × daily execution = enormous compounding cost.

How to Disable OpenClaw Crons in Bulk

The OpenClaw CLI has a cron disable command, but it requires the gateway to have the jobs loaded in memory — which wasn't the case here. The reliable fix is direct JSON surgery:

python3 -c "
import json

# Path to the cron definitions on this machine
path = '/home/parallels/.openclaw/cron/jobs.json'

with open(path) as f:
    jobs = json.load(f)

# Switch every job off, whatever its previous state
for job in jobs:
    job['enabled'] = False

with open(path, 'w') as f:
    json.dump(jobs, f, indent=2)

print('Done. All jobs disabled.')
"

Verify with:

cat ~/.openclaw/cron/jobs.json | python3 -c "
import json, sys

jobs = json.load(sys.stdin)
for j in jobs:
    print(f'{j[\"enabled\"]} | {j[\"name\"]}')
"

Every job should show False. Then restart the gateway:

systemctl --user restart openclaw-gateway && sleep 12 && ~/fix-openclaw-deps.sh
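The bulk edit above rewrites jobs.json in place. A slightly more defensive variant, sketched here as an assumption and not something OpenClaw provides, copies the file first so the old schedule can be restored. The function name and the .bak suffix are hypothetical. Like the one-liner, it assumes the file holds a JSON list of job objects with an enabled field.

```python
import json
import shutil
from pathlib import Path


def disable_all_jobs(jobs_path):
    """Back up jobs.json, then set enabled=False on every job.

    Returns how many jobs were enabled before the change.
    """
    path = Path(jobs_path).expanduser()
    backup = path.with_name(path.name + ".bak")  # hypothetical backup name
    shutil.copy2(path, backup)

    jobs = json.loads(path.read_text())
    previously_enabled = sum(1 for job in jobs if job.get("enabled"))
    for job in jobs:
        job["enabled"] = False
    path.write_text(json.dumps(jobs, indent=2))
    return previously_enabled
```

Calling disable_all_jobs("~/.openclaw/cron/jobs.json") would leave a jobs.json.bak next to the original. The returned count makes it obvious how many jobs were live, which is exactly the number we did not know going in.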

Diagnosing Issues with the Gateway Log

When your AI agent throws a "something went wrong" error in Telegram, the first place to look is the daily log file:

tail -50 /tmp/openclaw/openclaw-$(date +%Y-%m-%d).log | grep -E "ERROR|WARN|error|model"

Or watch it live while you reproduce the error:

tail -f /tmp/openclaw/openclaw-$(date +%Y-%m-%d).log | grep -E "ERROR|WARN|model|error"
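If you would rather post-process the log in Python, for example to count errors, the same filter is easy to reproduce. This is a minimal sketch using the same pattern as the grep commands above. The sample lines are hypothetical and the real log format may differ.

```python
import re

# Same alternation as the grep commands above.
PATTERN = re.compile(r"ERROR|WARN|error|model")


def matching_lines(lines, pattern=PATTERN):
    """Yield only the lines that grep -E would have kept."""
    for line in lines:
        if pattern.search(line):
            yield line.rstrip("\n")


# Hypothetical sample lines for illustration only.
sample = [
    "INFO gateway started\n",
    "ERROR anthropic/claude-haiku-4-5-20251001 is not a valid model ID\n",
    "WARN Network request for 'getUpdates' failed\n",
]
for line in matching_lines(sample):
    print(line)
```

With a real log, pass an open file object instead of the sample list, since a file iterates line by line in the same way.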

During this session we caught three different error types from the logs:

- anthropic/claude-haiku-4-5-20251001 is not a valid model ID: the Haiku model string had dashes instead of dots. OpenRouter uses anthropic/claude-haiku-4.5.

- Network request for 'getUpdates' failed: an IPv6 polling issue. Fixed by adding --dns-result-order=ipv4first to the gateway ExecStart.

Fixing the Model Alias System

OpenClaw's /model command lets you switch models mid-conversation. We tried switching down to Haiku while we optimized the crons and skill tree, but after we moved from Anthropic to OpenRouter, the old API keys and aliases kept resurfacing from the cache even though we had replaced them. For /model to work, each model needs an alias entry in openclaw.json, and we found Haiku was configured with the wrong model string. The correct OpenRouter ID for Haiku as of mid-2026 is anthropic/claude-haiku-4.5.

Always verify current model strings against the OpenRouter API before hardcoding them:

curl -s https://openrouter.ai/api/v1/models | python3 -c "
import json, sys

models = json.load(sys.stdin)
for m in models['data']:
    if 'haiku' in m['id'].lower():
        print(m['id'])
"

To update the alias in openclaw.json:

python3 -c "
import json

path = '/home/parallels/.openclaw/openclaw.json'
with open(path) as f:
    config = json.load(f)

models = config['agents']['defaults']['models']

# Remove bad entries
models.pop('anthropic/claude-haiku-4-5-20251001', None)
models.pop('openrouter/anthropic/claude-haiku-4-5-20251001', None)

# Add correct entry with alias
models['openrouter/anthropic/claude-haiku-4.5'] = {'alias': 'haiku'}

with open(path, 'w') as f:
    json.dump(config, f, indent=2)

print('Done.')
"

Building the Skills Registry — On-Demand Knowledge Loading

While fixing the immediate crisis, we tackled a deeper architectural problem: Chief was loading all 20+ skill descriptions into his system context on every single session — whether or not those skills were relevant. The tarot skill loaded even when you were asking about X posting. The astrology engine loaded even when you wanted a MIDI file.

This is the "books in hand" problem. A wizard shouldn't walk around carrying his entire library. He should know where the books are and reach for them when needed.

We designed and built a full on-demand skills registry system over the course of the session, with Chief building it himself under review gates at each stage.

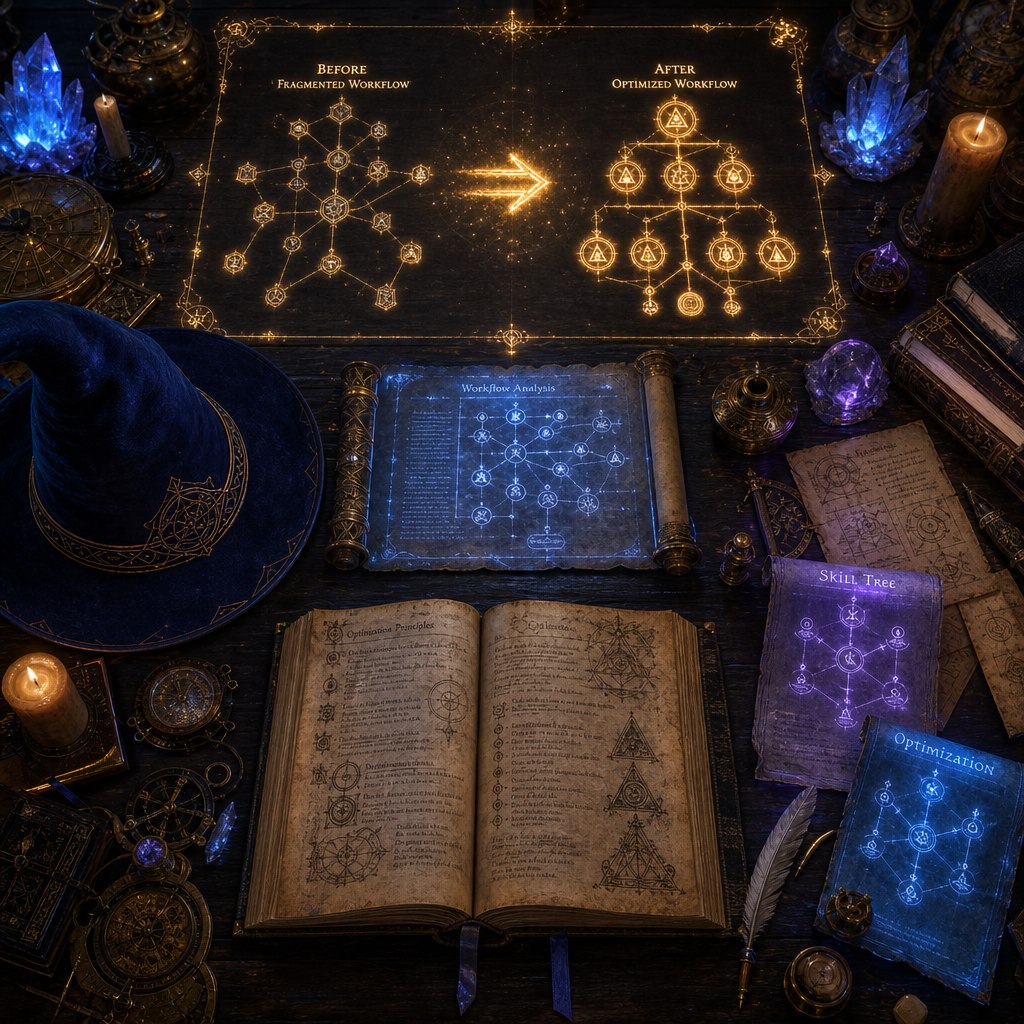

The Architecture

Before: Pre-loaded Stack

- All 20+ skill descriptions loaded at session start

- ~57k tokens consumed before first user message

- Skills you'd never use burning context budget

- Slower context setup, inflated cache costs

After: On-Demand Loading

- Lightweight session start, minimal context

- Chief reads SKILL.md files mid-conversation when needed

- Only relevant skills consume context

- Scales cleanly as you add more skills
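The difference between the two approaches can be sketched in a few lines of Python. This is an illustration of the idea and not OpenClaw's implementation: the SkillShelf class and its method names are hypothetical. The shelf knows where every SKILL.md lives but reads a file only the first time that skill is requested.

```python
from pathlib import Path


class SkillShelf:
    """Knows where each SKILL.md lives, reads one only when asked."""

    def __init__(self, locations):
        # skill_id -> path to that skill's SKILL.md
        self._locations = {k: Path(v) for k, v in locations.items()}
        self._loaded = {}  # skill_id -> file contents, filled lazily

    def load(self, skill_id):
        if skill_id not in self._locations:
            # Mirrors the "never guess skill paths" rule: unknown ids fail loudly.
            raise KeyError(f"unknown skill: {skill_id}")
        if skill_id not in self._loaded:
            self._loaded[skill_id] = self._locations[skill_id].read_text()
        return self._loaded[skill_id]

    @property
    def loaded_ids(self):
        """Skills that have actually been read so far."""
        return sorted(self._loaded)
```

A session that only ever asks about tarot pays for one file read. Everything else stays on the shelf, and loaded_ids shows which skills consumed context.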

The SQLite Registry

We built a SQLite-backed skills registry with three tables:

CREATE TABLE skills (
    id INTEGER PRIMARY KEY,
    skill_id TEXT UNIQUE NOT NULL,
    category TEXT NOT NULL,
    name TEXT NOT NULL,
    short_desc TEXT,
    full_desc TEXT,
    trigger_keywords TEXT, -- comma-separated: "tarot,cards,spread,reading"
    location TEXT, -- path to SKILL.md
    is_active BOOLEAN DEFAULT 1,
    priority INTEGER DEFAULT 50,
    last_updated TIMESTAMP DEFAULT CURRENT_TIMESTAMP
);

CREATE TABLE skill_knowledge (
    id INTEGER PRIMARY KEY,
    skill_id TEXT NOT NULL,
    section TEXT NOT NULL,
    content TEXT NOT NULL,
    searchable BOOLEAN DEFAULT 1
);

CREATE TABLE session_skill_requests (
    id INTEGER PRIMARY KEY,
    session_id TEXT,
    skill_id TEXT NOT NULL,
    timestamp TIMESTAMP DEFAULT CURRENT_TIMESTAMP,
    context TEXT -- what the user actually asked
);
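To show how the trigger_keywords column might be used, here is a minimal sketch of a keyword lookup against the skills table. It is not the contents of skills_registry.py. The find_skills function, the sample rows, and the skill paths are hypothetical, and the table is trimmed to the columns the lookup needs.

```python
import re
import sqlite3


def find_skills(conn, message):
    """Return skill_ids whose trigger keywords appear as words in the message."""
    words = set(re.findall(r"[a-z0-9]+", message.lower()))
    rows = conn.execute(
        "SELECT skill_id, trigger_keywords FROM skills "
        "WHERE is_active = 1 ORDER BY priority DESC"
    ).fetchall()
    matches = []
    for skill_id, keywords in rows:
        triggers = {k.strip().lower() for k in (keywords or "").split(",") if k.strip()}
        if words & triggers:
            matches.append(skill_id)
    return matches


conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE skills (
        id INTEGER PRIMARY KEY,
        skill_id TEXT UNIQUE NOT NULL,
        category TEXT NOT NULL,
        name TEXT NOT NULL,
        trigger_keywords TEXT,
        location TEXT,
        is_active BOOLEAN DEFAULT 1,
        priority INTEGER DEFAULT 50
    )"""
)
# Hypothetical rows for illustration only.
conn.executemany(
    "INSERT INTO skills (skill_id, category, name, trigger_keywords, location) "
    "VALUES (?, ?, ?, ?, ?)",
    [
        ("tarot", "divination", "Tarot", "tarot,cards,spread,reading", "skills/tarot/SKILL.md"),
        ("midi", "music", "MIDI", "midi,melody", "skills/midi/SKILL.md"),
    ],
)
print(find_skills(conn, "Can you pull a tarot spread for me?"))
```

Whole-word matching is deliberately simple. It will miss plurals and synonyms, so a real registry would probably need something more forgiving, but it is enough to decide which SKILL.md is worth reading.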

The registry is initialized and populated with a Python module (skills_registry.py) that can be run standalone for testing. Five master skills were defined, consolidating the original 20+ overlapping skills.

The Review Gate Process

We built this entire system with Chief under a strict review gate protocol. No code ran until we read it first. No files were modified until the code was approved. The sequence:

- Chief builds the module — standalone, no system integration

- We read every file — schema, functions, error handling reviewed

- Manual test run — paste the terminal output for verification

- Approve and proceed — or flag issues and require fixes before moving on

This process caught two real bugs before they went live: an unguarded error path that could throw inside a catch block, and a missing try/finally pattern that would leave database connections open on failure.
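The second bug, connections left open on failure, is commonly avoided in Python with contextlib.closing. The following is a sketch of that pattern and not the code Chief wrote. The function name is hypothetical, and it assumes the session_skill_requests table from the schema above already exists in the database file.

```python
import sqlite3
from contextlib import closing


def log_skill_request(db_path, session_id, skill_id, context):
    """Insert one row into session_skill_requests, always closing the connection."""
    # closing() guarantees conn.close() runs even if the INSERT raises.
    # "with conn" on its own only commits or rolls back; it does not close.
    with closing(sqlite3.connect(db_path)) as conn:
        with conn:
            conn.execute(
                "INSERT INTO session_skill_requests (session_id, skill_id, context) "
                "VALUES (?, ?, ?)",
                (session_id, skill_id, context),
            )
```

The nested with blocks do two separate jobs: the inner one handles the transaction, the outer one handles the connection. Forgetting the outer one is an easy mistake to make, which is why it slipped through until review.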

The TypeScript Plugin Attempt

We attempted to register load_skill as a native OpenClaw tool via the plugin SDK. Chief researched the plugin architecture, built a full TypeScript plugin with better-sqlite3, compiled it cleanly, and installed it via openclaw plugins install.

The plugin loaded — but the tool registration was blocked by a version mismatch. OpenClaw's contracts.tools manifest field (which declares that a plugin registers agent tools) isn't processed by the current gateway version. The plugin loads fine but the tool never becomes available to the agent.

As a workaround, the AGENTS.md system prompt was updated with two lines instructing Chief to use the read tool to load SKILL.md files on demand mid-conversation. When OpenClaw ships a version that processes contracts.tools, the native tool registration is ready to wire in: the code is already written and tested.

The AGENTS.md System Prompt Update

Chief's system prompt lives at ~/.openclaw/workspace/AGENTS.md. The skills section was updated from:

## Skills
Scan <available_skills>. If one clearly applies, read its SKILL.md
at exact <location> with `read`, then follow it.
One skill up front max. Never guess/fabricate skill paths.

To:

## Skills
Scan <available_skills>. If one clearly applies, read its SKILL.md
at exact <location> with `read`, then follow it.
One skill up front max. Never guess/fabricate skill paths.
When you recognize mid-conversation that you need a specific skill,
use the read tool to load its SKILL.md file.
Don't pre-load skills at session start.

The result was visible immediately. On the first test, a deep astrology reading, Chief loaded the astrology-engine skill precisely when the conversation required it, not at session start. The reading came out stronger because he had the real astronomical data plus the freedom to interpret without being boxed in by pre-loaded constraints.

Complete Restart Runbook

The standard procedure for restarting OpenClaw after any change:

# 1. Restart the gateway (user-level systemd service)
systemctl --user restart openclaw-gateway

# 2. Wait for startup
sleep 12

# 3. Patch the sqlite-vec module (required every restart)
~/fix-openclaw-deps.sh

# 4. Verify it came up
systemctl --user status openclaw-gateway | head -5

# 5. Check logs for errors
tail -20 /tmp/openclaw/openclaw-$(date +%Y-%m-%d).log | grep -E "ERROR|error"

Or as a single alias (add to ~/.bashrc):

alias cw-restart='systemctl --user restart openclaw-gateway && sleep 12 && ~/fix-openclaw-deps.sh'

Session Hygiene Going Forward

The session bloat problem is architectural: OpenClaw sessions accumulate indefinitely until you explicitly reset them. These habits prevent the next bloated-context bill:

- Send /new at the start of each working day — fresh session, zero accumulated history

- Use /model haiku for simple tasks — conversation, quick questions, status checks

- Use /model sonnet for agentic work — multi-step tasks, content creation, coding

- Reserve /model opus for strategic work — complex reasoning, architecture decisions

- Audit crons before enabling them — check the full jobs.json, understand what each one costs

- Watch OpenRouter dashboard after adding new crons — let them run for 24 hours, verify spend before expanding
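The model-routing habits above amount to a small lookup table. As a sketch only: the aliases are the ones used with /model in this article, while the task labels, the pick_model name, and the choice to default to the cheapest tier are assumptions made for illustration.

```python
# Aliases as used with /model above; the task labels are hypothetical.
MODEL_FOR_TASK = {
    "chat": "haiku",
    "status_check": "haiku",
    "content": "sonnet",
    "coding": "sonnet",
    "architecture": "opus",
}


def pick_model(task_kind, default="haiku"):
    """Return the model alias for a task kind, falling back to the cheapest tier."""
    return MODEL_FOR_TASK.get(task_kind, default)


for kind in ("chat", "coding", "architecture", "something_else"):
    print(f"{kind:15} -> /model {pick_model(kind)}")
```

Defaulting to the cheapest model means an unclassified task costs little if the guess is wrong, and you can always switch up mid-conversation when the work turns out to be harder.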

What Chief Said About the Result

After the first successful deep astrology reading with the new on-demand skill loading in place, Chief's self-assessment:

That's the mental model worth keeping. A lean agent with deep capabilities on demand outperforms a bloated agent carrying everything everywhere. The architect's job is building the library, not filling the wizard's hands.

Everything We Fixed — Summary

- Identified the token crisis — 57k token cache write × 2,595 turns from bloated session + halted crons still firing

- Disabled 13 runaway cron jobs — bulk JSON edit, verified with Python, gateway restart

- Fixed Haiku model alias — corrected to openrouter/anthropic/claude-haiku-4.5, with dot notation instead of dashes

- Established session hygiene — /new at session start, model routing by task complexity

- Built SQLite skills registry — Python module, 5 master skills, full CRUD, session analytics logging

- Built TypeScript OpenClaw plugin — compiled cleanly, installed correctly, pending contracts.tools SDK fix

- Updated AGENTS.md — on-demand skill loading instruction, books-on-shelf architecture live

- Confirmed working — astrology reading post-optimization ran clean with precise on-demand skill loading

Run cat ~/.openclaw/cron/jobs.json and list every enabled job. If you haven't reviewed them recently, disable them all and re-enable one at a time.


What Do You Think?

Have you hit runaway token costs on your own agent? Drop a comment — questions, pushback, or your own war stories. This is where the real conversation happens.